-

statistics - Inter-rater agreement in Python (Cohen's Kappa) - Stack

-

agreement (kappa)

-

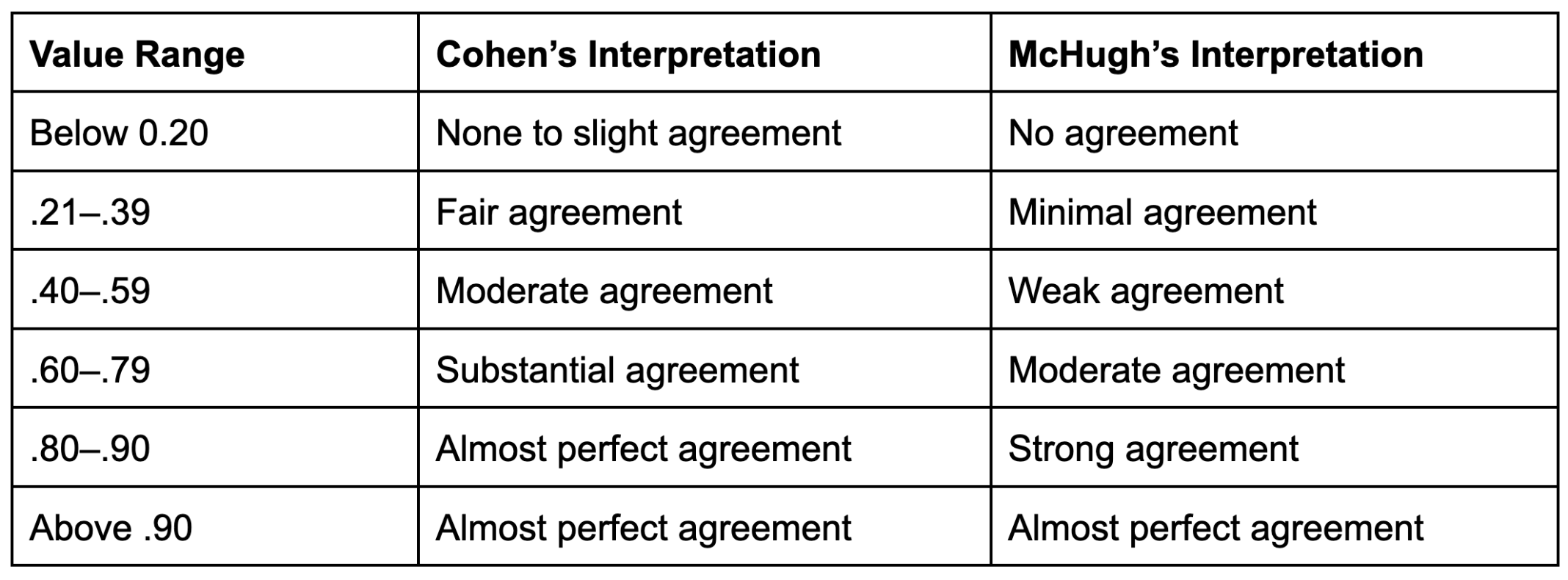

Interpretation guidelines for values for inter-rater reliability. | Download Table

-

Percentage agreement and Cohen's Kappa measure of rater reliability | Scientific Diagram

-

What is Kappa and How It Measure Inter-rater Reliability?

-

Inter-Rater Reliability: Kappa and Intraclass Correlation Coefficient - Professional Statistician For Hire

-

Reliability - Kappa, ICC, Pearson, Alpha - Concepts Hacked

-

Inter-rater -

-

An Introduction Cohen's Kappa and Reliability

-

Interrater reliability: kappa statistic | Semantic Scholar

-

Cohen's Kappa | Real Statistics Using

-

Inter-rater -

-

Interrater reliability: kappa statistic | Semantic Scholar

-

Qualitative Coding: reliability vs Agreement YouTube

-

Interrater agreement and interrater reliability: Key concepts, approaches, and applications -

-

the kappa statistic - Biochemia Medica

-

the kappa statistic - Biochemia Medica

-

Fleiss' and rater interpretation | Download Table

-

reliability -

-

![Understanding interobserver agreement: the kappa statistic (Semantic Scholar)](https://d3i71xaburhd42.cloudfront.net/e45dcfc0a65096bdc5b19d00e4243df089b19579/3-Table3-1.png)

[PDF] Understanding interobserver agreement: the kappa statistic. Semantic Scholar

-

Interpretation guidelines for values for inter-rater reliability. | Download Table

-

What Inter-rater/ Intercoder Reliability Qualitative Research? How Achieve it? - YouTube

-

What is Inter-rater Reliability? (Definition &

-

Inter-Rater Examples & Assessing Statistics By Jim

-

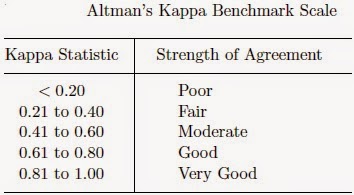

K. Inter-Rater Reliability Blog: Benchmarking Agreement Coefficients | Inter-rater Cohen kappa, AC1/AC2, Krippendorff Alpha

-

Weighted Cohen's Kappa | Real Using Excel